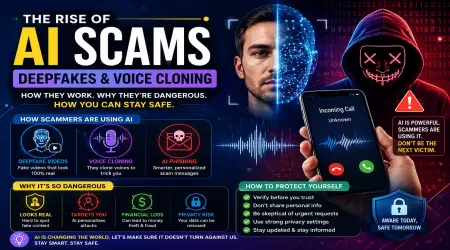

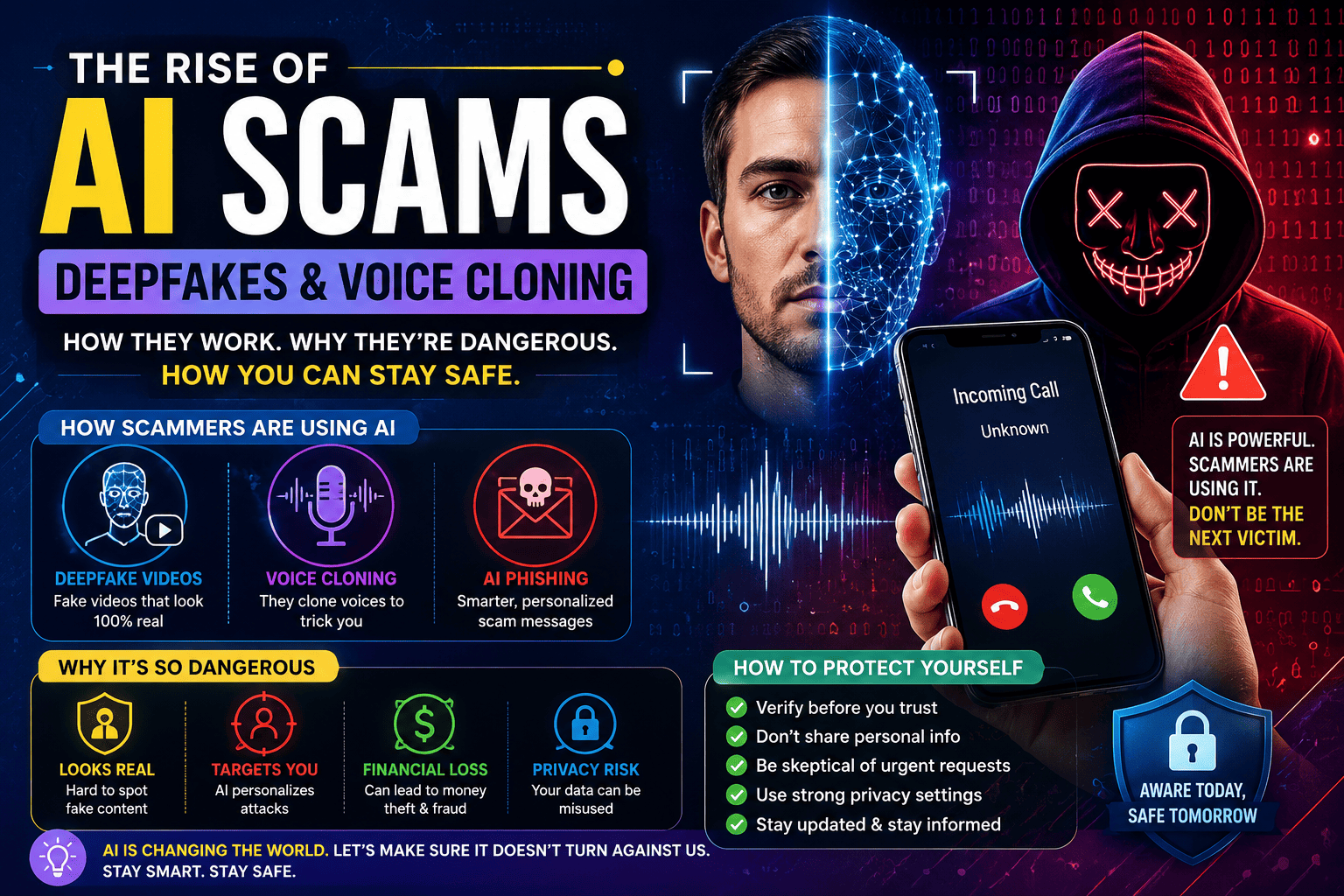

🚨 The Rise of AI Scams: How Fake Voices, Deepfakes, and Smart Fraud Are Fooling Millions

🌐 Artificial Intelligence is changing the world — but scammers are using it too.

A few years ago, online scams were relatively easy to recognize.

You could usually spot suspicious emails by poor grammar, fake promises, or strange-looking websites. Scam phone calls often sounded robotic or unprofessional. Most people learned to ignore them.

But things are changing rapidly.

Today’s scammers are smarter, faster, and more convincing than ever before — largely because of Artificial Intelligence.

AI tools can now clone human voices, generate realistic videos, write professional messages, imitate real people, and create fake identities within minutes. What once required professional hacking skills can now be done with simple AI software available online.

This new wave of AI-powered fraud is becoming one of the biggest digital threats facing ordinary people.

From fake job offers and cloned family voices to deepfake celebrity scams and AI-generated phishing emails, cybercriminals are using technology in ways that many people are still not prepared for.

The scary part?

These scams often look completely real.

🤖 Why AI Has Made Scams More Dangerous

Artificial Intelligence itself is not the problem.

In fact, AI is helping improve healthcare, education, customer service, productivity, and scientific research.

The issue is that scammers have discovered how powerful these tools can be when used for manipulation.

Traditionally, scammers relied on sending thousands of poorly written emails hoping a few people would fall for them.

AI has changed that completely.

Modern AI systems can:

- ✉️ Write highly convincing emails

- 🎙️ Clone voices from short audio clips

- 🎥 Create realistic deepfake videos

- 💬 Generate human-like conversations

- 🌍 Translate scams into multiple languages instantly

- 📱 Personalize attacks using public social media information

This means scams no longer feel “fake.”

They feel personal.

And that makes them far more dangerous.

🎭 The Terrifying Growth of Deepfake Scams

One of the most alarming developments is the rise of deepfake technology.

Deepfakes use AI to create realistic fake videos or audio recordings that imitate real people.

At first, deepfakes were mostly internet entertainment.

People used them to swap celebrity faces in videos or create funny online content.

Now criminals are using the same technology for fraud.

Imagine receiving a video call that appears to show your boss asking for an urgent bank transfer.

Or hearing your child’s voice calling for help.

These situations sound unbelievable — but they are already happening.

In several reported cases worldwide, scammers used AI-generated voices to imitate company executives and trick employees into transferring large amounts of money.

Because the voices sounded authentic, victims believed they were speaking to real people.

Deepfake technology is improving so quickly that many fake videos are becoming extremely difficult to detect with the human eye.

📞 Voice Cloning Is Becoming Frighteningly Easy

Perhaps the most emotionally dangerous AI scam is voice cloning.

Modern AI tools can copy someone’s voice using just a few seconds of recorded audio.

That audio can often be taken from:

- TikTok videos

- Instagram reels

- YouTube content

- WhatsApp voice notes

- Public interviews

- Podcasts

Once scammers collect enough audio, they can generate fake conversations that sound shockingly realistic.

Parents have already reported receiving calls that sounded exactly like their children begging for emergency money.

In many cases, victims panic before thinking logically.

That emotional reaction is exactly what scammers want.

Fear, urgency, and confusion are powerful psychological tools.

AI simply makes them more believable.

📧 AI Phishing Emails Are Harder to Detect

Older phishing emails were often full of spelling mistakes and awkward sentences.

Today, AI can generate polished professional emails within seconds.

These messages can imitate:

- Banks

- Delivery companies

- Government offices

- Employers

- Streaming platforms

- Online stores

Some scammers even personalize messages using information collected from social media profiles.

For example, if someone posts about traveling, scammers may send fake hotel confirmations or airline alerts.

If someone shares a new job update, they may receive fake HR-related emails.

This level of personalization makes scams feel legitimate.

And because AI writes naturally, traditional warning signs are becoming less obvious.

🧠 Why Smart People Still Fall for Scams

Many people believe scams only target the careless or inexperienced.

That is not true.

Modern scams are designed around psychology, not intelligence.

Scammers understand human emotions extremely well.

They create urgency.

They create fear.

They create excitement.

And when emotions become strong, logical thinking becomes weaker.

For example:

- A fake banking alert creates panic

- A fake investment opportunity appeals to greed

- A cloned family voice creates fear

- A fake romance profile creates emotional attachment

AI allows scammers to manipulate these emotions more effectively than ever before.

Even highly educated professionals have fallen victim to sophisticated cyber fraud.

🌍 Social Media Is Fueling AI Fraud

Social media platforms unintentionally provide scammers with enormous amounts of personal data.

Every public photo, voice clip, birthday post, workplace update, or travel story can potentially be used to build targeted scams.

People often underestimate how much information they share online.

A scammer does not need to hack your account to learn about you.

Sometimes your public profile already reveals enough.

This is especially dangerous when combined with AI.

A criminal can collect your photos, voice samples, interests, relationships, and communication style — then use AI tools to imitate you.

The result is highly personalized fraud that feels disturbingly real.

🔐 How to Protect Yourself From AI Scams

The good news is that awareness remains one of the strongest defenses.

Most scams still depend on emotional reactions and rushed decisions.

Slowing down can prevent many attacks.

✅ Verify Before You Trust

If someone requests urgent money, sensitive information, or account access, verify their identity independently.

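Call them directly using a number you already trust, such as one saved in your contacts or listed on an official website, not one supplied in the message itself.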

Do not rely solely on voice messages, emails, or video calls.
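
The habit described above can be written down as a simple rule. The sketch below is only an illustration: the `Request` fields, the `HIGH_RISK_ASKS` labels, and the `next_step` helper are hypothetical names invented for this example, not part of any real tool.

```python
from dataclasses import dataclass
from typing import Dict

# Request types the advice above singles out (labels are illustrative).
HIGH_RISK_ASKS = {"money", "sensitive_information", "account_access"}


@dataclass
class Request:
    claimed_sender: str   # who the message says it is from
    ask: str              # what is being requested
    urgent: bool = False  # whether the message pressures you to act right now


def next_step(request: Request, saved_contacts: Dict[str, str]) -> str:
    """Suggest what to do before acting on a request."""
    if request.ask not in HIGH_RISK_ASKS and not request.urgent:
        return "proceed"
    # Only use a number saved beforehand, never one supplied in the message.
    number = saved_contacts.get(request.claimed_sender)
    if number is None:
        return "do not act: no trusted way to confirm the sender"
    return f"pause and call {number} before acting"


call = Request("Mom", "money", urgent=True)
print(next_step(call, {"Mom": "555-0100"}))
```

The point of the design is that the decision never depends on how real the voice or video seemed, only on what is being asked and whether you can confirm it through a contact you saved yourself.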

✅ Be Careful What You Share Publicly

Limit public access to personal information, especially voice recordings and private family details.

The less data scammers have, the harder it becomes to create convincing AI impersonations.

✅ Use Strong Security Practices

Enable two-factor authentication on important accounts.

Use strong, unique passwords.

Keep devices and apps updated regularly.

Basic cybersecurity habits still matter enormously.
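
To see why two-factor authentication helps, it is useful to look at how many authenticator apps produce their rotating codes. The sketch below follows the published TOTP standard (RFC 6238) using only Python's standard library. It is a simplified illustration, not the code of any particular app, and the secret shown is the standard's public test key, which should never be used for a real account.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if at_time is None:
        at_time = time.time()
    # The code changes once per time step (30 seconds by default).
    counter = int(at_time // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# Public test key from the RFC, for demonstration only.
TEST_SECRET = b"12345678901234567890"
print(totp(TEST_SECRET, at_time=59, digits=8))  # 94287082, the RFC 6238 test value
```

Because the code depends on both a shared secret and the current time, a scammer who tricks someone into revealing a password still cannot log in without also obtaining a fresh code. This is also why a one-time code should never be read out to a caller.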

✅ Watch for Emotional Manipulation

Scammers often try to create urgency.

If something feels emotionally overwhelming, pause before reacting.

Taking even a few minutes to verify information can stop a scam completely.

🏢 Companies Are Also Becoming Major Targets

AI scams are not limited to ordinary individuals.

Businesses are increasingly under attack as well.

Cybercriminals now use AI to:

- Mimic executives

- Generate fake invoices

- Create fake meeting invitations

- Produce realistic phishing campaigns

- Automate social engineering attacks

Businesses of all sizes lose billions of dollars to digital fraud every year.

As AI tools become more accessible, experts believe these attacks may increase dramatically.

This is forcing businesses to rethink cybersecurity training and identity verification systems.

Many organizations are now teaching employees to verify sensitive requests through multiple channels rather than trusting emails or voice calls alone.
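
A multi-channel rule like this can be stated very compactly. The sketch below is a hypothetical policy check, with the function name and channel labels invented for illustration: a confirmation counts only if it arrived through a channel other than the one the request came in on.

```python
from typing import Set


def independently_confirmed(request_channel: str, confirmed_via: Set[str], required: int = 1) -> bool:
    """Return True when enough confirmations came through channels other than the original request."""
    independent = confirmed_via - {request_channel}
    return len(independent) >= required


# An emailed invoice "confirmed" only by replying to the same email thread.
print(independently_confirmed("email", {"email"}))  # False
# The same invoice confirmed by a phone call to a known number.
print(independently_confirmed("email", {"email", "phone_callback"}))  # True
```

Replying to the original message does not count, because an attacker who controls that channel controls the reply as well.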

⚡ The Future of AI Fraud Could Become Even More Advanced

The technology behind AI continues to evolve at incredible speed.

Future scams may become even more personalized and realistic.

Experts predict criminals could eventually create fully AI-generated virtual identities that interact naturally in video calls, customer support chats, and social media platforms.

Some researchers also worry about real-time deepfake conversations becoming harder to detect.

This creates serious concerns not only for cybersecurity but also for politics, media, and public trust.

If fake videos and voices become impossible to distinguish from reality, misinformation could spread more easily than ever before.

That is why governments, technology companies, and cybersecurity experts are racing to develop AI detection tools and digital verification systems.

But the battle between security and fraud is likely to continue for years.

💡 Why Digital Awareness Matters More Than Ever

The internet has made life easier in countless ways.

But it has also created new risks.

AI scams represent a major shift because they combine advanced technology with human psychology.

The result is fraud that feels increasingly believable.

In the past, people worried about computer viruses and hacked passwords.

Now they must also worry about fake voices, fake videos, fake identities, and AI-generated deception.

Digital awareness is no longer optional.

It is becoming an essential life skill.

Parents, students, professionals, and businesses all need to understand how modern scams operate.

The more people understand these threats, the harder it becomes for scammers to succeed.

✅ Conclusion

Artificial Intelligence is one of the most powerful technologies ever created.

It has the potential to improve industries, solve complex problems, and transform everyday life.

But like any powerful tool, it can also be abused.

AI-powered scams are growing rapidly because they exploit something deeply human — trust.

By cloning voices, generating deepfake videos, writing convincing messages, and personalizing attacks, scammers are creating fraud that feels more real than ever before.

The solution is not fear.

The solution is awareness, critical thinking, and smarter digital habits.

As technology continues to evolve, staying informed may become one of the most important forms of online protection.

Because in the age of AI, seeing is no longer always believing.